Policymakers are accustomed to thinking in finite, measurable terms like laws, budgets, and program implementation. Artificial intelligence, however, no longer advances in a straight line or within the familiar boundaries of public administration. Instead, it seems to accelerate along exponential curves, reshaping expectations faster than institutions can plausibly adapt. To many observers, it feels less like a set of discrete advances and more like an uncontrollable, even frightening, open-ended growth event. First, I will draw historical comparisons to show how earlier “infinite” growth events, such as industrialization and the rise of the internet, led humanity to create new frameworks for understanding change. Then, I will use those historical parallels to inform how we should think about today’s AI growth event.

Past “infinities” were stabilized by new conceptual frameworks for industrial production and digital communication. We should apply those frameworks to the exponential growth of knowledge itself that large language models (LLMs) are now ushering in. The policy challenge ahead is not to restrain progress, but to encourage market mechanisms to allocate capital justly while establishing rules of the road that help policymakers navigate this new terrain.

THE PAST

The Industrial Revolution was the first example of what appeared to be an unrestrained economic growth event. Between 1750 and 1900, the world changed faster than anyone had thought possible: Earth’s population roughly doubled, energy use rose tenfold, and factory output doubled every couple of decades. For centuries, leaders and thinkers had conceptualized the economy as essentially cyclical.[1] The growth unleashed by fossil fuels and interchangeable parts forced a rethinking of that conception.

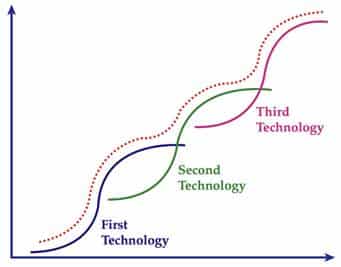

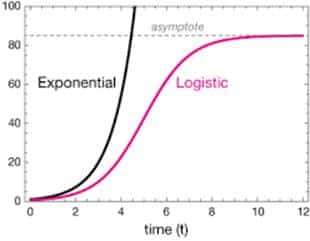

Policymakers met this new scale of growth not by halting it but by inventing abstractions that made it manageable. Time standardization synchronized local hours into a global clock, the metric system replaced regional measures with a universal one, and national income accounting turned countless transactions into aggregates like GDP, making industrial expansion both comprehensible and administrable. Yet even this apparently infinite growth met its limits. Resource scarcity and diminishing returns imposed natural asymptotes, bending exponential expansion into a gentler S-curve (see Appendix A).

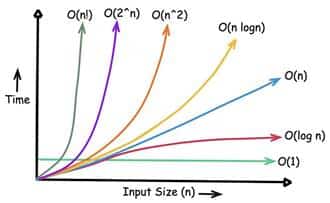

If the Industrial Revolution represented an infinity of matter and energy, the late twentieth century brought an infinity of information. When computer scientists describe how efficiently an algorithm scales, they use “Big O notation” to characterize how runtime grows with input size: an O(n) process scales linearly, for example, while an O(2ⁿ) process scales exponentially. The digital world did not merely grow faster; it grew in ways that resembled far steeper complexity classes. From 1980 to 2020, global digital storage capacity expanded by more than a billion-fold, and the possible communication pathways among connected devices multiplied far faster than the devices themselves: each additional node multiplies the number of possible routes through the network, so in a fully connected network the count of distinct routes rises roughly factorially, approaching O(n!).[2]

This explosion of connectivity appeared boundless, an information singularity in which the constraint shifted from physical scarcity to cognitive overload: how could finite human attention process effectively infinite data? Humanity did not try to halt this growth but shaped it through organizing frameworks. The TCP/IP protocol stack and HTTP standards governed how machines talk to one another. PageRank[3], the foundation of Google’s search algorithm, imposed order on chaos by mathematically ranking pages according to the links pointing to them, making a vast, unstructured internet navigable.
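The core idea behind PageRank can be sketched as a power iteration: each page repeatedly passes its score to the pages it links to, with a small probability of a random jump to any page. The sketch below is a minimal illustration in Python, not Google’s production system; the four-page link graph and the function name `pagerank` are hypothetical, and the damping factor of 0.85 is a commonly used value rather than something this essay specifies.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             tol: float = 1e-10, max_iter: int = 1000) -> dict[str, float]:
    """Power iteration on a link graph given as {page: [pages it links to]}."""
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start from a uniform distribution
    for _ in range(max_iter):
        # Every page receives the "random jump" share of rank.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outlinks in links.items():
            if outlinks:
                # A page splits its rank evenly among the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # Dangling page (no outlinks): spread its rank across all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        converged = sum(abs(new_rank[p] - rank[p]) for p in pages) < tol
        rank = new_rank
        if converged:
            break
    return rank


# Hypothetical four-page web: most pages link to "hub", which links back to "a".
web = {
    "a": ["hub"],
    "b": ["hub", "a"],
    "c": ["hub"],
    "hub": ["a"],
}
scores = pagerank(web)
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{page}: {score:.3f}")
```

Because a link from a highly ranked page carries more weight than a link from an obscure one, the ranking emerges from the structure of the web itself rather than from a central editor. Pages with no outgoing links are treated here as linking to every page, which is one common convention among several.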

Over time, however, this informational infinity encountered a fundamental limit: human attention.[4] The number of connected devices and data streams continued to grow exponentially, but the hours humans have available to consume that information remained fixed. Attention became the bottleneck that no increase in bandwidth or storage could overcome. We are still in the midst of this information growth event, but its trajectory, too, now bends toward an asymptote.

Shape of Successive Economic Growth Events

THE PRESENT – EXPONENTIAL AI GROWTH

As artificial intelligence systems become more autonomous, we are entering what some researchers describe as an agentic web: a digital ecosystem where AI agents do not merely respond to human prompts but increasingly interact and coordinate with one another. Bots are not new; they power search engines, automated trading, and online customer service, and they already account for roughly half of all internet traffic.[5] What is different now is their improved ability to act independently, initiate tasks without human supervision, and even orchestrate other bots to carry out complex sequences of actions. As bots become more effective at communicating and delegating work among themselves, network activity could grow super-exponentially, on the order of O(n!) in the number of interacting agents, far faster than traditional systems were designed to handle. This expansion risks crowding out human traffic as automated exchanges compete for bandwidth and computing resources.
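The O(n!) figure can be illustrated with a simple counting model. The sketch below assumes a hypothetical, fully connected network of n agents and counts the ordered delegation chains that start at one agent and hand work to further agents without revisiting any, alongside the O(n²) count of direct pairwise channels and the O(2ⁿ) curve for comparison. It is an illustrative upper bound, not a measurement: real agent networks are sparse, and protocols and costs constrain which chains actually occur. The function names are hypothetical.

```python
from math import comb, perm


def pairwise_channels(n: int) -> int:
    """Direct links between every pair of agents: n(n-1)/2, i.e. O(n^2)."""
    return comb(n, 2)


def delegation_chains(n: int) -> int:
    """Ordered chains that start at one fixed agent and delegate to k further,
    distinct agents (k = 0 .. n-1). Grows roughly like e * (n-1)!, i.e. O(n!)."""
    return sum(perm(n - 1, k) for k in range(n))


# Hypothetical fully connected networks of increasing size.
print(f"{'agents':>6} {'pairs':>6} {'2^n':>8} {'chains':>16}")
for n in (3, 5, 10, 15):
    print(f"{n:>6} {pairwise_channels(n):>6} {2 ** n:>8} {delegation_chains(n):>16}")
```

Even at n = 10, the chain count (986,410) dwarfs both the 45 pairwise channels and 2¹⁰ = 1,024, which is why combinatorial coordination, rather than the raw number of agents, is what could strain network capacity.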

One solution is to make bot-to-bot communication more efficient and to develop protocols that let agents coordinate more effectively.[6] Another is to scale out physical network resources to handle the explosive increase in traffic such systems could generate.[7] This will require significant investment in digital infrastructure, an area where policy choices are decisive. Policymakers can support this effort by avoiding onerous, anti-growth regulations that stifle investment. The role of policy should be to support market mechanisms that allocate capital efficiently, not to obstruct or distort them.

Stronger methods are also needed to distinguish human activity from automated traffic. Traditional CAPTCHA systems, once a reliable way to verify human presence, are now often bypassed with ease by frontier AI models.[8] Governments can support the creation of next-generation verification systems, such as voluntary proof-of-personhood credentials (similar to the U.S. military’s Common Access Card), secure hardware-based authentication, or decentralized reputation networks. Bots can act in ways that benefit their human operators, but we should ensure that the human-centric internet is not crowded out by noise.

Policymakers should also approach AI safety with an assumption of permanent imperfection. The layers of safeguards built into large language models, such as guardrails, red-teaming, and alignment mechanisms, are helpful but cannot guarantee full security.[9] Even systems held to the highest cybersecurity standards have suffered high-profile breaches: the SolarWinds compromise infiltrated multiple U.S. federal agencies, and the 2015 Office of Personnel Management hack compromised sensitive information on government employees. These examples underscore a maxim of cybersecurity: any computer program of non-trivial size will necessarily contain vulnerabilities.[10]

Despite this fundamental insecurity, I propose that policymakers not treat LLMs as inherently dangerous but instead view them through the framework of reliability engineering. Just as aviation evolved from fragile prototypes into the safest form of transport through redundancy, simulation, and continuous monitoring, AI governance can follow a similar path. The goal should be to engineer trust at scale, not to prohibit risk altogether. This requires strengthening guardrails that detect and contain harmful outputs, such as leaked classified information or personally identifiable medical data, before they spread. It also means building redundancy that makes occasional failures manageable rather than catastrophic: pairing LLM outputs with independent human review in sensitive applications, for example, or requiring parallel model evaluations in critical systems to cross-check for hallucinations or bias before real-world actions are taken.
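The arithmetic behind redundancy is simple if one assumes that safeguards fail independently: when each layer misses a given error with probability pᵢ, the chance that the error slips past every layer is the product of the pᵢ. The Python sketch below uses hypothetical miss rates chosen only for illustration, and the function name is likewise hypothetical. Independence is the key, and optimistic, assumption: models trained on similar data tend to share blind spots, so real-world gains from stacking checks will be smaller than this calculation suggests.

```python
def residual_miss_probability(miss_rates: list[float]) -> float:
    """Probability that an error slips past every check, assuming the checks
    fail independently (a strong, optimistic assumption)."""
    p = 1.0
    for rate in miss_rates:
        if not 0.0 <= rate <= 1.0:
            raise ValueError("miss rates must be probabilities in [0, 1]")
        p *= rate  # the error must evade this layer as well
    return p


# Hypothetical miss rates: a primary model's guardrail, a second-model
# cross-check, and an independent human reviewer.
layers = [0.05, 0.10, 0.20]
print(f"single layer: {layers[0]:.4f}")
print(f"three layers: {residual_miss_probability(layers):.4f}")
```

With these illustrative rates, a 5% single-layer miss rate falls to 0.1% once three independent layers are stacked.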

In a March 2025 blog post titled “Measuring AI Ability to Complete Long Tasks,” METR introduced the “50% task-completion time horizon”: the length of a task, measured by how long it takes a human expert, that an AI system can complete successfully half the time.[11] This horizon has doubled roughly every seven months, showing that AI systems are rapidly extending the length and complexity of tasks they can perform autonomously. For policymakers, this offers a concrete way to track AI’s exponential progress and anticipate its impact on labor and regulation. But like all exponential trends, it will eventually slow as limits on power, chip supply and capability, and data availability constrain further growth.
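A doubling time converts directly into a growth curve, h(t) = h₀ · 2^(t/T), where h₀ is today’s horizon and T is the doubling time (about seven months in METR’s estimate). The Python sketch below extrapolates under that assumption; the 60-minute starting horizon is a hypothetical baseline, not a figure from METR, the function name is hypothetical, and the projection holds only for as long as the trend itself does.

```python
def projected_horizon(h0_minutes: float, months_elapsed: float,
                      doubling_months: float = 7.0) -> float:
    """Extrapolate a 50% time horizon that doubles every `doubling_months`:
    h(t) = h0 * 2 ** (t / doubling_months)."""
    return h0_minutes * 2 ** (months_elapsed / doubling_months)


# Hypothetical baseline: a 60-minute horizon at month 0.
for months in (0, 7, 12, 24, 36):
    minutes = projected_horizon(60, months)
    print(f"month {months:>2}: {minutes:>7.0f} min ({minutes / 60:.1f} h)")
```

A seven-month doubling time implies growth of about 2^(12/7) ≈ 3.3× per year, so under this assumption a one-hour horizon would stretch to roughly eleven hours of expert work within two years.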

That raises a deeper question, one that connects science to policy: if machines continue to shrink the amount of time needed to complete tasks that once required human effort, does that accelerating capability represent the creation of new knowledge? To explore this idea, we can look to a historical example of bounded but genuine algorithmic creativity: DeepMind’s AlphaGo and its now-famous Move 37.

THE FUTURE – A ROLE FOR HUMANS?

When AlphaGo, an AI system built to play the East Asian strategy game Go (known in Korea as baduk), played its now-legendary Move 37 against Lee Sedol in 2016, it shocked experts with a move that few human professionals would have chosen. Early in the second game of the match, AlphaGo placed a stone in an unexpected position that initially looked like a mistake but later proved brilliant. The move emerged from an algorithm that explored and refined patterns of play humans had never considered. In other words, AlphaGo expressed creativity and advanced human knowledge.

However, AlphaGo’s game-playing creativity does not generalize to many areas of human knowledge. A system like AlphaGo can search for new strategies, but only within fixed rules: the board and the rules remain constant, and what counts as a “good” move is determined by a fixed verification function.[12] Although AI systems may increasingly be able to simulate human creativity in other fields, they will ultimately be constrained by some ruleset and some method for verifying correctness. Human reasoning works differently. It operates in an open and fluid space where meanings shift and new ideas can emerge. Large language models, by contrast, navigate this space in discrete, statistical steps, governed by meanings fixed by patterns in their training data. They can mimic the outward form of reasoning, but they do not inhabit the continuous, evolving space of meaning that characterizes human thought and language. Our logic is not bound to any single, fixed system of rules: we can invent new goals, coin new words for new ideas, and even devise new ways of defining correctness. As such, the ability of AI systems to generate all truths is necessarily limited.

Humans, in short, are not obsolete, and our imaginations will remain essential. Policy should therefore focus on fostering human-AI co-learning loops: environments where people learn to guide, critique, and refine algorithmic outputs, and where AI systems in turn help humans explore ideas, test hypotheses, and extend their cognitive reach. This requires sustained investment in education, research, and institutional practices that teach individuals how to work effectively alongside intelligent systems. It also demands a cultural shift that treats AI not as a replacement for creativity but as a partner. Policymakers should not fear AI. They should welcome it as a pathway to expand human flourishing.

APPENDIX A – MATH

Big O Notation.

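When computer scientists describe how efficiently an algorithm scales, they use Big O notation. It describes not exact speed but the rate of growth, an asymptotic upper bound on how running time grows with input size n: an O(n) process scales linearly, while O(n²) and O(2ⁿ) processes scale quadratically and exponentially, respectively. O(n!), the factorial class referenced in the main text, grows faster still.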
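The gap between these classes becomes vivid when the step counts are tabulated. The short Python sketch below prints n, n², 2ⁿ and n! for a few input sizes, ignoring the constant factors that Big O notation also ignores; the helper name `growth` is hypothetical.

```python
from math import factorial


def growth(n: int) -> tuple[int, int, int, int]:
    """Step counts for O(n), O(n^2), O(2^n) and O(n!) processes at input size n
    (constant factors ignored)."""
    return n, n ** 2, 2 ** n, factorial(n)


print(f"{'n':>3} {'n^2':>6} {'2^n':>10} {'n!':>22}")
for n in (1, 5, 10, 20):
    lin, quad, expo, fact = growth(n)
    print(f"{lin:>3} {quad:>6} {expo:>10} {fact:>22}")
```

At n = 20, an O(n²) process needs 400 steps, an O(2ⁿ) process about a million, and an O(n!) process about 2.4 quintillion.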

Big O Notation

Logistic Growth.

Mathematics also gives us tools for understanding systems that grow rapidly but cannot expand indefinitely. Logistic growth, for example, describes how populations, economies, or technologies accelerate quickly before slowing as they approach a limiting capacity.
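A standard form of the logistic curve is P(t) = K / (1 + ((K − P₀)/P₀)·e^(−rt)), where K is the carrying capacity, r the growth rate and P₀ the starting value. While P is far below K, the curve is nearly indistinguishable from exponential growth P₀·e^(rt); growth is fastest at the inflection point t* = ln((K − P₀)/P₀)/r, where P = K/2, and slows thereafter. The Python sketch below compares the two curves using hypothetical parameters.

```python
import math


def logistic(t: float, capacity: float, rate: float, initial: float) -> float:
    """Logistic growth P(t) = K / (1 + ((K - P0) / P0) * exp(-r t)).
    Early on P grows almost exponentially; it then bends toward the ceiling K."""
    return capacity / (1 + ((capacity - initial) / initial) * math.exp(-rate * t))


def exponential(t: float, rate: float, initial: float) -> float:
    """Unconstrained exponential growth P(t) = P0 * exp(r t), for comparison."""
    return initial * math.exp(rate * t)


K, r, P0 = 1000.0, 0.5, 1.0  # hypothetical parameters
for t in range(0, 31, 5):
    print(f"t={t:>2}  exponential={exponential(t, r, P0):>12.1f}"
          f"  logistic={logistic(t, K, r, P0):>7.1f}")
```

The two columns track each other closely at first, then diverge sharply as the logistic curve flattens toward K while the exponential keeps climbing, which is the S-curve shape described in the main text.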

Incompleteness

In 1931, mathematician Kurt Gödel proved that any consistent formal system whose axioms can be effectively listed and that is capable of expressing basic arithmetic contains true statements that cannot be proven within that system’s own rules.[13] In other words, no such consistent set of axioms can capture all mathematical truth. This result reveals a deep limitation of formal reasoning and, by extension, of artificial intelligence systems based on large language models, which try to imitate it.

[1] Smil, Vaclav. Creating the Twentieth Century: Technical Innovations of 1867–1914 and Their Lasting Impact.

[2] Hilbert, Martin, and Priscila López. “The World’s Technological Capacity to Store, Communicate, and Compute Information.”

[3] Page, Lawrence, Sergey Brin, Rajeev Motwani, and Terry Winograd. “The PageRank Citation Ranking: Bringing Order to the Web.”

[4] Simon, Herbert A. “Designing Organizations for an Information-Rich World.”

[5] Imperva. Bad Bot Report 2023: The Unseen Cyber Threat.

[6] Krishnan, Naveen. “Advancing Multi-Agent Systems Through Model Context Protocol: Architecture, Implementation, and Applications.” 2025.

[7] PYMNTS. “Big Tech Charts Paths on AI, Infrastructure and Regulation.”

[8] Xu, Zhaoyuan, et al. “Yet Another Text Captcha Solver: A Generative Adversarial Network Based Approach.”

[9] Amodei, Dario, Chris Olah, Jacob Steinhardt, Paul Christiano, John Schulman, and Dan Mané. “Concrete Problems in AI Safety.”

[10] Schneier, Bruce. Secrets and Lies: Digital Security in a Networked World.

[11] Kwa, Thomas, Ben West, Joel Becker, et al. “Measuring AI Ability to Complete Long Tasks.”

[12] Silver, David, et al. “Mastering the Game of Go with Deep Neural Networks and Tree Search.”

[13] Gödel, Kurt. “Über formal unentscheidbare Sätze der Principia Mathematica und verwandter Systeme I.”